One thing we’ve found valuable at Passport is treating LLM (large language model) selection as a systems design problem, not a branding decision.

If there’s one mistake I see teams make right now, it’s over-indexing on model quality and under-investing in system design.

In practice, picking one model and routing everything through it breaks down quickly, especially in logistics and ecommerce environments. A single “workflow” might involve carrier APIs, customs signals, warehouse events, and customer notifications all stitched together; the data is messy, and latency and cost actually matter.

Instead, we think about LLMs the same way we think about any distributed system: different components should do different jobs, and each job should be handled by the component best suited for it.

That mindset has become foundational to how we build logistics AI at Passport.

Why “One Model to Rule Them All” Doesn’t Work

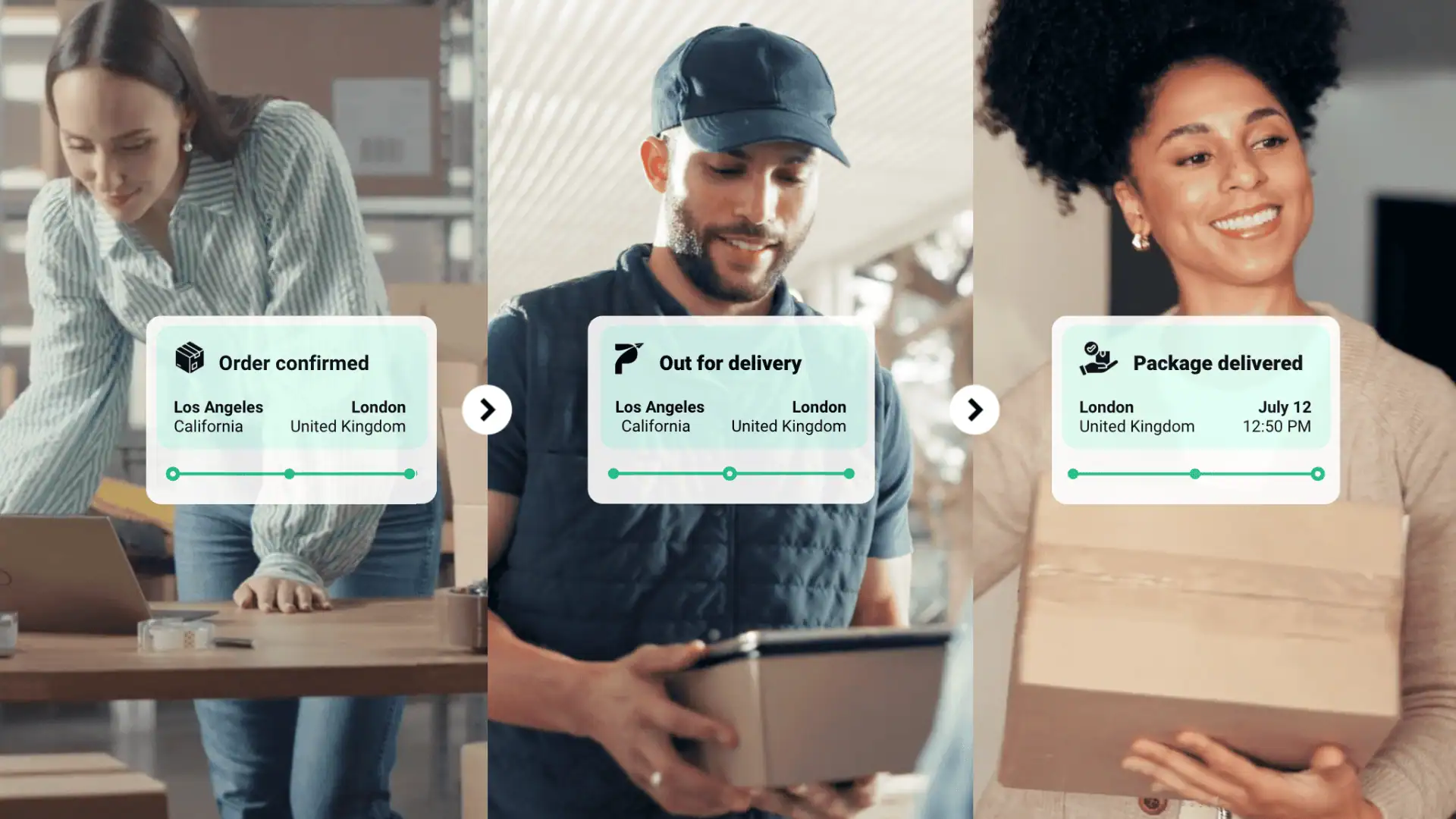

In ecommerce AI — especially in the international logistics space that we play in — we are dealing with a wide range of tasks:

- Structured data retrieval (orders, shipments, tracking events)

- Semi-structured logs (carrier updates, customs signals)

- Unstructured reasoning (what actually happened to a package?)

- Actionable outputs (what should we do next?)

These are not the same problem. Not even close.

They don’t require the same capabilities, and they definitely shouldn’t all be handled by the same model.

At the same time, we’re operating in an environment where token costs fluctuate and token budgets are increasingly constrained, model performance can degrade under heavy load, and lower-tier models are rapidly improving and getting significantly cheaper (often 5–8x less expensive than frontier models).

So the question becomes: why pay for a reasoning-heavy model when the task is just structured retrieval?

That’s where a systems approach to ecommerce AI starts to pay off.
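To make the cost argument concrete, here is a rough back-of-the-envelope sketch. The per-token price and the daily token volumes are made-up placeholders, not Passport's numbers or any vendor's price list; only the 5x ratio is taken from the low end of the range quoted above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StageLoad:
    name: str
    tokens_per_day: int  # hypothetical daily token volume for this stage


def daily_cost(loads, price_per_million):
    """Cost of running every stage in `loads` at one flat price (USD per 1M tokens)."""
    total_tokens = sum(load.tokens_per_day for load in loads)
    return total_tokens * price_per_million / 1_000_000


# Placeholder numbers for illustration only.
FRONTIER_PRICE = 10.0             # USD per 1M tokens (assumed)
CHEAP_PRICE = FRONTIER_PRICE / 5  # low end of the 5-8x range

retrieval = [StageLoad("retrieval", 80_000_000)]
reasoning = [StageLoad("interpretation", 20_000_000)]

# Option A: one frontier model handles both stages.
single_model = daily_cost(retrieval + reasoning, FRONTIER_PRICE)

# Option B: retrieval goes to the cheap tier, reasoning stays on the frontier tier.
split = daily_cost(retrieval, CHEAP_PRICE) + daily_cost(reasoning, FRONTIER_PRICE)

print(f"single frontier model: ${single_model:.2f}/day")
print(f"split by stage:        ${split:.2f}/day")
```

The exact figures matter less than the shape: when most of the token volume sits in retrieval, moving that stage to a cheaper tier dominates the savings.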

A Practical Example: Logistics AI for Observability

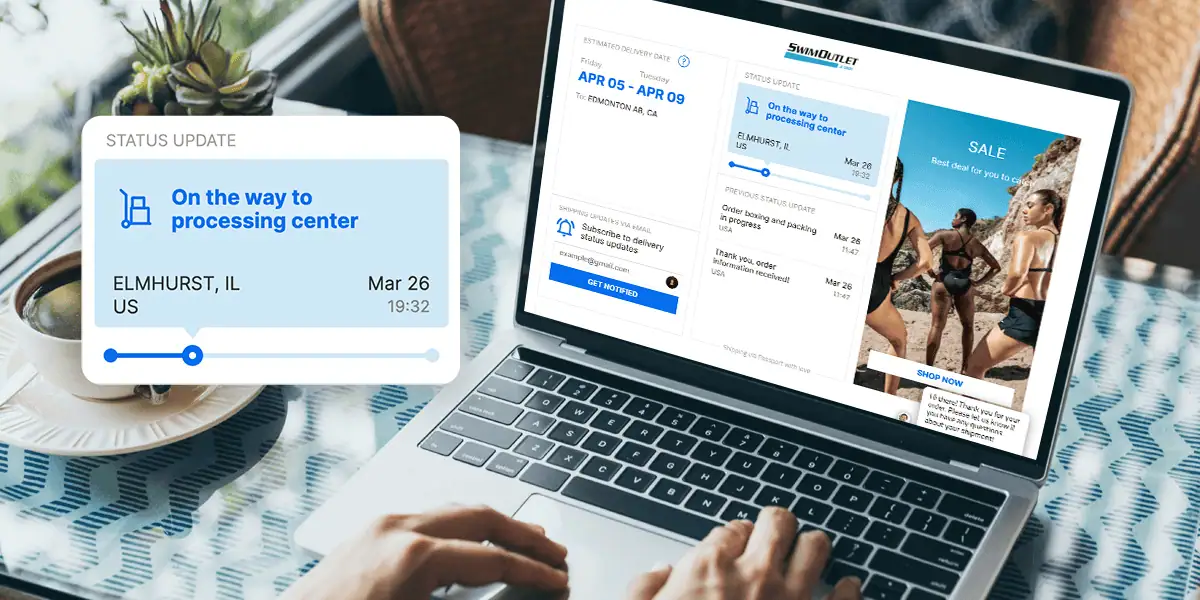

One place this shows up clearly is in how we handle log and telemetry analysis across our platform.

For log and telemetry retrieval from server logs, we use GLM-5-Turbo in the collection stage. That stage is mostly about forming structured queries, retrieving relevant records, and extracting the right evidence slice quickly and cheaply.

This is not a reasoning problem. It’s a precision and efficiency problem.

We ran a large number of side-by-side comparisons and found that, even though GLM-5-Turbo has a smaller context window than the alternatives, given the right structure it’s fast, low-risk, and gets the job done.

Once the evidence is gathered, we pass the output to GPT-5.4 for the reasoning stage: interpreting noisy logs, correlating signals across systems, and generating a human-usable explanation of what actually happened.

That’s the part that actually requires reasoning. It has a higher risk profile compared to the previous stage, so it benefits from a more capable (and more expensive) model.

By separating those stages, we avoid wasting high-value compute on low-value tasks, and we get better results.
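A minimal sketch of that two-stage split might look like the following. It is not our production code: the `Model` callable, the JSON filter format, the function names, and the stub models are all assumptions made so the example runs offline without any real client.

```python
import json
from typing import Callable, Dict, List

LogRecord = Dict[str, str]
Model = Callable[[str], str]  # prompt in, text out; a stand-in for a real client


def collect(question: str, logs: List[LogRecord], cheap_model: Model,
            limit: int = 50) -> List[LogRecord]:
    """Collection stage: the cheap model writes a structured filter, plain code applies it."""
    query = json.loads(cheap_model(f"Return a JSON filter for: {question}"))
    matches = [r for r in logs if all(r.get(k) == v for k, v in query.items())]
    return matches[:limit]  # keep the evidence slice small


def explain(question: str, evidence: List[LogRecord], reasoning_model: Model) -> str:
    """Reasoning stage: only the evidence slice reaches the expensive model."""
    lines = "\n".join(json.dumps(r, sort_keys=True) for r in evidence)
    return reasoning_model(f"Question: {question}\nEvidence:\n{lines}")


# Stub models (hypothetical) so the sketch is runnable as-is.
def stub_cheap(prompt: str) -> str:
    return json.dumps({"shipment_id": "S-1", "level": "error"})


def stub_reasoning(prompt: str) -> str:
    evidence_lines = prompt.split("Evidence:\n", 1)[1].splitlines()
    return f"summary of {len(evidence_lines)} record(s)"


logs = [
    {"shipment_id": "S-1", "level": "error", "msg": "customs hold"},
    {"shipment_id": "S-1", "level": "info", "msg": "label created"},
    {"shipment_id": "S-2", "level": "error", "msg": "address invalid"},
]

question = "Why is shipment S-1 delayed?"
evidence = collect(question, logs, stub_cheap)
answer = explain(question, evidence, stub_reasoning)
print(answer)
```

The point of the split is visible in `explain`: the expensive model never sees the full log set, only the records the collection stage selected.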

Applying the Same Pattern to Ecommerce Engineering

We use a similar division for software engineering workflows.

For source-code reading, implementation analysis, and fix suggestions, we use Opus-4.6 (soon to be Opus-4.7). That model performs well when the task requires navigating code structure, understanding intent across files, and proposing technically coherent remediations rather than just summarizing snippets.

Again, the key is matching the model to the shape of the problem:

- Retrieval → fast, cheap, structured

- Interpretation → where you actually spend on reasoning

- Proposal generation → somewhere in between, but needs to be clean and reliable

When you align those correctly, the system starts to feel less like “AI glued onto a workflow” and more like a purpose-built AI-native system.
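One way to express that matching in code is a small routing table keyed by stage. This is a sketch under assumptions: the model identifiers are placeholders rather than real model names, and the token limits are arbitrary values chosen for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    RETRIEVAL = "retrieval"
    INTERPRETATION = "interpretation"
    PROPOSAL = "proposal"


@dataclass(frozen=True)
class ModelChoice:
    model: str               # placeholder identifier, not a real model name
    max_output_tokens: int   # arbitrary illustrative limit
    structured_output: bool  # whether the stage expects machine-readable output


ROUTES = {
    Stage.RETRIEVAL: ModelChoice("fast-cheap-model", 512, True),
    Stage.INTERPRETATION: ModelChoice("reasoning-model", 4096, False),
    Stage.PROPOSAL: ModelChoice("mid-tier-model", 2048, True),
}


def route(stage: Stage) -> ModelChoice:
    """Look up the model configured for a stage; fail loudly on an unmapped stage."""
    try:
        return ROUTES[stage]
    except KeyError:
        raise ValueError(f"no model configured for stage {stage!r}") from None


print(route(Stage.RETRIEVAL).model)
```

Keeping the mapping in one table means swapping a model for a stage is a one-line change rather than a refactor.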

The Architecture Behind Effective Ecommerce AI

In practice, our architecture separates three core responsibilities into different subagents:

1. Retrieval

Focused on gathering the right data as efficiently as possible:

- Query generation

- Index retrieval (server logs, databases, APIs)

- Evidence extraction

This layer benefits from speed, cost efficiency, and structured output — not deep reasoning.

2. Interpretation

This is where the system actually “thinks”:

- Correlating signals across systems

- Handling ambiguity and noise

- Forming a coherent understanding of events

This is where higher-tier LLMs earn their keep.

3. Proposal Generation

Turning insight into action:

- Suggested fixes

- Customer-facing explanations

- Internal recommendations

This layer needs clarity, structure, and reliability more than raw intelligence.
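The three responsibilities above can be sketched as typed hand-offs between subagents. The type names (`Evidence`, `Finding`, `Proposal`), the `run_pipeline` helper, and the stub subagents are all hypothetical, chosen only to show the contract between layers; a real implementation would wrap model calls behind each callable.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Evidence:
    records: List[str]


@dataclass(frozen=True)
class Finding:
    summary: str
    confidence: float  # 0.0 to 1.0


@dataclass(frozen=True)
class Proposal:
    audience: str
    text: str


Retriever = Callable[[str], Evidence]
Interpreter = Callable[[Evidence], Finding]
Proposer = Callable[[Finding], List[Proposal]]


def run_pipeline(question: str, retrieve: Retriever, interpret: Interpreter,
                 propose: Proposer) -> List[Proposal]:
    """Chain the three subagents; each sees only the previous stage's typed output."""
    evidence = retrieve(question)
    if not evidence.records:
        return []  # nothing to interpret, so skip the more expensive stages
    return propose(interpret(evidence))


# Stub subagents (hypothetical) so the sketch runs without any model client.
def stub_retrieve(question: str) -> Evidence:
    return Evidence(["customs hold", "docs missing"] if "S-1" in question else [])


def stub_interpret(evidence: Evidence) -> Finding:
    return Finding(f"{len(evidence.records)} related events", confidence=0.8)


def stub_propose(finding: Finding) -> List[Proposal]:
    return [
        Proposal("customer", f"Update: {finding.summary}"),
        Proposal("internal", f"Investigate: {finding.summary}"),
    ]


proposals = run_pipeline("What happened to S-1?", stub_retrieve, stub_interpret, stub_propose)
empty = run_pipeline("What happened to S-9?", stub_retrieve, stub_interpret, stub_propose)
print([p.audience for p in proposals], empty)
```

Because each layer only depends on the type it receives, any one subagent can be moved to a different model without touching the other two.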

What This Means for Logistics and Ecommerce Teams

If you’re building with AI in logistics or ecommerce, the takeaway isn’t “use these exact models.”

It’s this: Stop thinking about LLMs as a single decision. Start thinking in terms of systems.

A few practical implications:

- Design for specialization. Break workflows into distinct stages with clear responsibilities.

- Optimize for cost and performance together. The cheapest model that does the job well is usually the right choice.

- Expect model volatility. Performance, pricing, and availability will keep shifting — your architecture should be flexible enough to adapt.

- Measure outcomes, not outputs. The goal isn’t to use the most advanced model — it’s to produce the most reliable result.
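As one illustration of designing for cost and volatility together, the sketch below tries candidate models from cheapest to most expensive and falls through when a call fails or the output is rejected. The candidate tuples, the relative costs, the `is_acceptable` check, and the stub models are assumptions for the example, not a description of a specific production setup.

```python
from typing import Callable, List, Tuple

Model = Callable[[str], str]
Candidate = Tuple[str, float, Model]  # (name, relative cost, callable)


class AllModelsFailed(RuntimeError):
    """Raised when every candidate either errored or produced a rejected output."""


def call_with_fallback(prompt: str, candidates: List[Candidate],
                       is_acceptable: Callable[[str], bool]) -> Tuple[str, str]:
    """Try candidates from cheapest to most expensive; return (model_name, output)."""
    errors = []
    for name, _cost, model in sorted(candidates, key=lambda c: c[1]):
        try:
            output = model(prompt)
        except Exception as exc:  # e.g. a timeout or rate limit in a real client
            errors.append(f"{name}: {exc}")
            continue
        if is_acceptable(output):
            return name, output
        errors.append(f"{name}: output rejected")
    raise AllModelsFailed("; ".join(errors))


# Stub models (hypothetical): one times out, one returns nothing useful, one works.
def flaky(prompt: str) -> str:
    raise TimeoutError("timed out")


def empty(prompt: str) -> str:
    return ""


def big(prompt: str) -> str:
    return "customs hold on S-1"


candidates = [("big", 10.0, big), ("cheap", 1.0, flaky), ("mid", 3.0, empty)]
name, output = call_with_fallback("What happened to S-1?", candidates,
                                  is_acceptable=lambda s: bool(s.strip()))
print(name, output)
```

The acceptance check is where "measure outcomes, not outputs" shows up in practice: the cheapest model wins only if its result actually passes.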

The Bigger Shift: From Tools to Systems

What’s changing in logistics AI and ecommerce AI isn’t just the models; it’s how we use them.

We’re moving away from “AI as a tool” and toward AI as infrastructure.

That shift requires a different mindset: less emphasis on model comparisons and demos, and more focus on orchestration, system design, and production reliability.

At Passport, this approach has led to better cost/performance efficiency, stronger specialization, and more reliable results than asking a single model to handle an entire pipeline end to end.

And more importantly, it’s allowed us to build AI systems that actually hold up under real-world ecommerce complexity, not just in controlled environments.

If you’re thinking about how to apply logistics AI or ecommerce AI in your own stack, start by asking a simple question:

What are the distinct jobs your system needs to do, and which model is actually best suited for each one?

That’s where the real leverage is.

Authored by Tony Chen

CTO | Passport

Tony Chen, a seasoned entrepreneur and tech luminary, navigates the realms of software, hardware, and data. Formerly an Engagement Manager at McKinsey, his strategic counsel shaped Fortune 100 companies. As co-founder of Pantry, he revolutionized fresh food retail. Tony’s engineering prowess was honed at Ericsson, Qualcomm, and Intel. Armed with a BS in EECS from UC Berkeley, he’s a trailblazer in every venture, fusing business acumen with technological finesse.